Professor Zhou Yipeng of Macquarie University recently delivered a special academic report on large model acceleration technology in Room B307 of the Information Engineering Building at Nanchang University, at the invitation of the School of Artificial Intelligence. Faculty and student representatives from the school attended to explore the development trends and application prospects of cutting-edge artificial intelligence technologies.

Frontier Focus: Core Exploration for Efficient Operation of Large Models

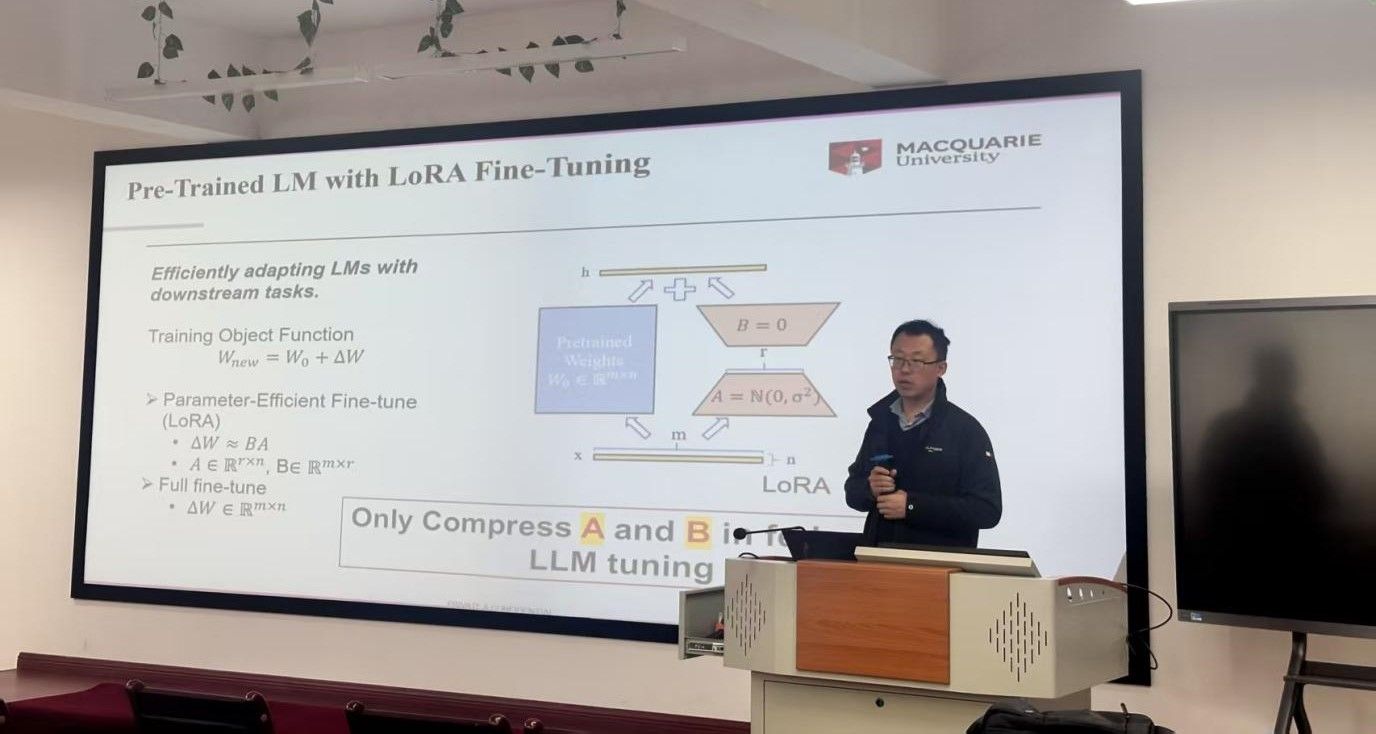

Professor Zhou Yipeng has long worked in the field of artificial intelligence and has a substantial record of academic achievements. His research team's work on large model optimization has been influential in industry. The report focused on the core problem of running large models efficiently, pointing out that current large model applications face practical challenges such as high storage costs and substantial computational overhead. Model compression offers a key way past these bottlenecks: by shrinking a model while preserving its core performance, it enables lightweight deployment of large models, which has made it one of the industry's main areas of focus.

Technical Analysis: Model Compression and Federated Fine-tuning

The report also analyzed the application value of federated fine-tuning for large models. As an important training method in privacy computing scenarios, it follows the principle that "data stays put, models move": each participant trains on its own data locally and shares only model updates. This provides a secure solution for collaborative training on multi-source data and is particularly suitable for scenarios with high data security requirements. Professor Zhou Yipeng emphasized that the development of large model technology needs to balance "performance assurance" with "adaptation to actual needs," and that related techniques must be designed around specific scenarios. His team's results have been validated in practice.
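For readers unfamiliar with the "data stays put, models move" idea, the following is a minimal sketch of one common scheme, federated averaging, and is not the method presented in the report. It assumes a toy linear model in place of a large model, and the names `local_update`, `federated_average`, `run_rounds`, and the two-client dataset are hypothetical, chosen only for illustration. Each client trains on its own private data, and only the resulting weights are sent to the server for averaging.

```python
from typing import List, Tuple

Vector = List[float]
Dataset = List[Tuple[Vector, float]]  # (features, target) pairs held by one client


def local_update(weights: Vector, data: Dataset, lr: float = 0.1, epochs: int = 5) -> Vector:
    """Run a few SGD passes on one client's private data and return only the new weights."""
    w = list(weights)
    for _ in range(epochs):
        for x, y in data:
            pred = sum(wi * xi for wi, xi in zip(w, x))
            err = pred - y
            # Gradient step for squared error on a linear model.
            w = [wi - lr * err * xi for wi, xi in zip(w, x)]
    return w


def federated_average(client_weights: List[Vector], client_sizes: List[int]) -> Vector:
    """Server step: average client weights, weighted by each client's sample count."""
    total = sum(client_sizes)
    dim = len(client_weights[0])
    return [
        sum(w[i] * n for w, n in zip(client_weights, client_sizes)) / total
        for i in range(dim)
    ]


def run_rounds(global_weights: Vector, clients: List[Dataset], rounds: int = 50) -> Vector:
    """Repeat: send the model out, train locally, send weights back, average."""
    w = list(global_weights)
    for _ in range(rounds):
        updates = [local_update(w, data) for data in clients]  # raw data never leaves a client
        w = federated_average(updates, [len(d) for d in clients])
    return w


# Hypothetical toy data: both clients sample the same relation y = 2*x0 + 1*x1.
clients = [
    [([1.0, 0.0], 2.0), ([0.0, 1.0], 1.0)],
    [([1.0, 1.0], 3.0), ([2.0, 1.0], 5.0), ([1.0, 2.0], 4.0)],
]
final_weights = run_rounds([0.0, 0.0], clients)
```

In this sketch the server only ever sees weight vectors, never the clients' samples. A real federated fine-tuning setup for large models would typically exchange far smaller updates than the full model and add further protections, which this toy example does not attempt to show.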

Academic Interaction: Broadening Research Horizons through Dialogue

The exchange session following the report was lively. Faculty and students held in-depth discussions on topics such as "scenario adaptability of model compression technology," "directions for large model optimization," and "application challenges of federated learning." Participants said the report clarified the core ideas behind large model acceleration and offered important inspiration for their subsequent research.

The event is part of Nanchang University's effort to build a high-level academic platform. Going forward, the school will continue to deepen academic exchange and research cooperation in artificial intelligence, contributing to the development of the discipline and to high-quality industrial progress.

Reviewed by: Zeng Qingwei, Xu Zichen, Luo Yan